What is TextBlob?

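TextBlob is an open-source text processing library written in Python. It can perform many natural language processing tasks, such as part-of-speech tagging, noun phrase extraction, sentiment analysis, and text translation. You can read about all of TextBlob's features in the official documentation.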

GitHub: https://github.com/sloria/TextBlob/

Documentation: https://textblob.readthedocs.io/en/dev/

Why should I care about TextBlob?

I am learning TextBlob for the following reasons:

-

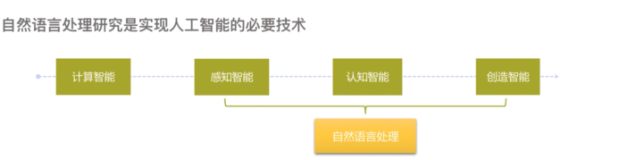

我想开发需要进行文本处理的应用。我们给应用添加文本处理功能之后,应用能更好地理解人们的行为,因而显得更加人性化。文本处理很难做对。TextBlob站在巨人的肩膀上(NTLK),NLTK是创建处理自然语言的Python程序的最佳选择。

-

我想学习下如何用 Python 进行文本处理。

Installing TextBlob

$ pip install -U textblob

$ python -m textblob.download_corpora # Downloads the NLTK corpora; skip this step if they were already downloaded when you installed NLTK

Quickstart

Create a TextBlob

First, the import.

>>> from textblob import TextBlob

Let’s create our first TextBlob.

>>> wiki = TextBlob("Python is a high-level, general-purpose programming language.")

Part-of-speech Tagging

Part-of-speech tags can be accessed through the tags property.

>>> wiki.tags

[('Python', 'NNP'), ('is', 'VBZ'), ('a', 'DT'), ('high-level', 'JJ'), ('general-purpose', 'JJ'), ('programming', 'NN'), ('language', 'NN')]

Noun Phrase Extraction

Similarly, noun phrases are accessed through the noun_phrases property.

>>> wiki.noun_phrases

WordList(['python'])

Sentiment Analysis

The sentiment property returns a namedtuple of the form Sentiment(polarity, subjectivity). The polarity score is a float within the range [-1.0, 1.0]. The subjectivity is a float within the range [0.0, 1.0] where 0.0 is very objective and 1.0 is very subjective.

>>> testimonial = TextBlob("Textblob is amazingly simple to use. What great fun!")

>>> testimonial.sentiment

Sentiment(polarity=0.39166666666666666, subjectivity=0.4357142857142857)

>>> testimonial.sentiment.polarity

0.39166666666666666

Tokenization (Words and Sentences)

You can break TextBlobs into words or sentences.

>>> zen = TextBlob("Beautiful is better than ugly. "

... "Explicit is better than implicit. "

... "Simple is better than complex.")

>>> zen.words

WordList(['Beautiful', 'is', 'better', 'than', 'ugly', 'Explicit', 'is', 'better', 'than', 'implicit', 'Simple', 'is', 'better', 'than', 'complex'])

>>> zen.sentences

[Sentence("Beautiful is better than ugly."), Sentence("Explicit is better than implicit."), Sentence("Simple is better than complex.")]

Sentence objects have the same properties and methods as TextBlobs.

>>> for sentence in zen.sentences:

...     print(sentence.sentiment)

For more advanced tokenization, see the Advanced Usage guide.

Word Inflection and Lemmatization (singular and plural forms, past tense, and so on)

Each word in TextBlob.words or Sentence.words is a Word object (a subclass of unicode in Python 2, or of str in Python 3) with useful methods, e.g. for word inflection.

>>> sentence = TextBlob('Use 4 spaces per indentation level.')

>>> sentence.words

WordList(['Use', '4', 'spaces', 'per', 'indentation', 'level'])

>>> sentence.words[2].singularize()

'space'

>>> sentence.words[-1].pluralize()

'levels'

Words can be lemmatized by calling the lemmatize method.

>>> from textblob import Word

>>> w = Word("octopi")

>>> w.lemmatize()

'octopus'

>>> w = Word("went")

>>> w.lemmatize("v") # Pass in part of speech (verb)

'go'

WordNet Integration

You can access the synsets for a Word via the synsets property or the get_synsets method, optionally passing in a part of speech.

>>> from textblob import Word

>>> from textblob.wordnet import VERB

>>> word = Word("octopus")

>>> word.synsets

[Synset('octopus.n.01'), Synset('octopus.n.02')]

>>> Word("hack").get_synsets(pos=VERB)

[Synset('chop.v.05'), Synset('hack.v.02'), Synset('hack.v.03'), Synset('hack.v.04'), Synset('hack.v.05'), Synset('hack.v.06'), Synset('hack.v.07'), Synset('hack.v.08')]

You can access the definitions for each synset via the definitions property or the define() method, which can also take an optional part-of-speech argument.

>>> Word("octopus").definitions

['tentacles of octopus prepared as food', 'bottom-living cephalopod having a soft oval body with eight long tentacles']

You can also create synsets directly.

>>> from textblob.wordnet import Synset

>>> octopus = Synset('octopus.n.02')

>>> shrimp = Synset('shrimp.n.03')

>>> octopus.path_similarity(shrimp)

0.1111111111111111

For more information on the WordNet API, see the NLTK documentation on the Wordnet Interface.
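To make the path_similarity score above less opaque: NLTK defines it as 1 / (d + 1), where d is the number of links on the shortest path between the two synsets in the hypernym hierarchy. A score of 0.111 (1/9) therefore means the two senses are 8 links apart. The sketch below applies the same formula to a tiny hand-made taxonomy. The HYPERNYM table and the helper names are assumptions for illustration only and do not reflect the real WordNet graph.

```python
from collections import deque

# Toy hypernym links (child -> parent). These are made up for illustration
# and are NOT the real WordNet hierarchy.
HYPERNYM = {
    "octopus": "cephalopod",
    "cephalopod": "mollusk",
    "mollusk": "invertebrate",
    "shrimp": "crustacean",
    "crustacean": "arthropod",
    "arthropod": "invertebrate",
    "invertebrate": "animal",
}


def neighbours(node):
    """Nodes one hypernym/hyponym link away from node."""
    linked = {child for child, parent in HYPERNYM.items() if parent == node}
    if node in HYPERNYM:
        linked.add(HYPERNYM[node])
    return linked


def path_similarity(a, b):
    """1 / (shortest path length + 1), or None when no path exists."""
    queue, seen = deque([(a, 0)]), {a}
    while queue:
        node, dist = queue.popleft()
        if node == b:
            return 1 / (dist + 1)
        for nxt in neighbours(node) - seen:
            seen.add(nxt)
            queue.append((nxt, dist + 1))
    return None


print(path_similarity("octopus", "octopus"))  # 1.0 (identical nodes)
print(path_similarity("octopus", "shrimp"))   # 6 links apart, so 1/7
```

Identical nodes score 1.0, and the score shrinks towards 0 as the path between two nodes gets longer.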

WordLists

A WordList is just a Python list with additional methods.

>>> animals = TextBlob("cat dog octopus")

>>> animals.words

WordList(['cat', 'dog', 'octopus'])

>>> animals.words.pluralize()

WordList(['cats', 'dogs', 'octopodes'])

Spelling Correction

Use the correct() method to attempt spelling correction.

>>> b = TextBlob("I havv goood speling!")

>>> print(b.correct())

I have good spelling!

Word objects have a Word.spellcheck() method that returns a list of (word, confidence) tuples with spelling suggestions.

>>> from textblob import Word

>>> w = Word('falibility')

>>> w.spellcheck()

[('fallibility', 1.0)]

Spelling correction is based on Peter Norvig’s “How to Write a Spelling Corrector”[1] as implemented in the pattern library. It is about 70% accurate [2].
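The idea behind Norvig's corrector can be sketched in a few lines of plain Python: generate every string one edit away from the input, keep the ones that are known words, and pick the most frequent. This is a simplified illustration, not the code that TextBlob or pattern actually runs. The WORD_FREQ table is a tiny made-up vocabulary, and the second round of edits (distance 2) from Norvig's essay is left out for brevity.

```python
from collections import Counter

# Tiny hypothetical word-frequency table; a real corrector would count
# words in a large corpus instead.
WORD_FREQ = Counter({"i": 50, "have": 40, "good": 30,
                     "spelling": 10, "spell": 8, "hive": 2})
LETTERS = "abcdefghijklmnopqrstuvwxyz"


def edits1(word):
    """All strings one delete, transpose, replace, or insert away from word."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [left + right[1:] for left, right in splits if right]
    transposes = [left + right[1] + right[0] + right[2:]
                  for left, right in splits if len(right) > 1]
    replaces = [left + c + right[1:]
                for left, right in splits if right for c in LETTERS]
    inserts = [left + c + right for left, right in splits for c in LETTERS]
    return set(deletes + transposes + replaces + inserts)


def known(words):
    """The subset of words that appear in the vocabulary."""
    return {w for w in words if w in WORD_FREQ}


def correct(word):
    """Most frequent known candidate; the word itself wins if it is known."""
    word = word.lower()
    candidates = known([word]) or known(edits1(word)) or [word]
    return max(candidates, key=lambda w: WORD_FREQ[w])


print(correct("havv"))     # have
print(correct("goood"))    # good
print(correct("speling"))  # spelling
```

A word with no known candidate within one edit is returned unchanged, which is one reason a corrector of this kind is far from perfectly accurate.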

Get Word and Noun Phrase Frequencies

There are two ways to get the frequency of a word or noun phrase in a TextBlob.

The first is through the word_counts dictionary.

>>> monty = TextBlob("We are no longer the Knights who say Ni. "

... "We are now the Knights who say Ekki ekki ekki PTANG.")

>>> monty.word_counts['ekki']

3

If you access the frequencies this way, the search will not be case sensitive, and words that are not found will have a frequency of 0.

The second way is to use the count() method.

>>> monty.words.count('ekki')

3

You can specify whether or not the search should be case-sensitive (default is False).

>>> monty.words.count('ekki', case_sensitive=True)

2

Each of these methods can also be used with noun phrases.

>>> wiki.noun_phrases.count('python')

1
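As a rough illustration of what the two lookups above compute, the following sketch reproduces the same counts with only the standard library. The regex tokenizer and the count_word helper are assumptions made for this example; TextBlob's own tokenizer is more sophisticated.

```python
import re
from collections import Counter

text = ("We are no longer the Knights who say Ni. "
        "We are now the Knights who say Ekki ekki ekki PTANG.")

# Naive tokenizer (an assumption for this sketch): runs of letters,
# digits, and apostrophes count as words.
words = re.findall(r"[A-Za-z0-9']+", text)

# Case-insensitive counts, similar in spirit to word_counts.
# A Counter returns 0 for keys it has never seen.
word_counts = Counter(w.lower() for w in words)


def count_word(words, target, case_sensitive=False):
    """Hypothetical helper mirroring the idea behind WordList.count()."""
    if case_sensitive:
        return sum(1 for w in words if w == target)
    return sum(1 for w in words if w.lower() == target.lower())


print(word_counts["ekki"])                             # 3
print(word_counts["shrubbery"])                        # 0
print(count_word(words, "ekki"))                       # 3
print(count_word(words, "ekki", case_sensitive=True))  # 2
```

The case-sensitive count drops to 2 because "Ekki" with a capital E no longer matches.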

Translation and Language Detection

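New in version 0.5.0. Note that translate() and detect_language() were deprecated in version 0.16.0 and removed in version 0.18.0, so the examples in this section only work with older releases.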

TextBlobs can be translated between languages.

>>> en_blob = TextBlob(u'Simple is better than complex.')

>>> en_blob.translate(to='es')

TextBlob("Simple es mejor que complejo.")

If no source language is specified, TextBlob will attempt to detect the language. You can also specify the source language explicitly, as in the next example. translate() raises TranslatorError if the TextBlob cannot be translated into the requested language, or NotTranslated if the translated result is the same as the input string.

>>> chinese_blob = TextBlob(u"美丽优于丑陋")

>>> chinese_blob.translate(from_lang="zh-CN", to='en')

TextBlob("Beautiful is better than ugly")

You can also attempt to detect a TextBlob’s language using TextBlob.detect_language().

>>> b = TextBlob(u"بسيط هو أفضل من مجمع")

>>> b.detect_language()

'ar'

As a reference, language codes can be found here.

Language translation and detection is powered by the Google Translate API.

Parsing

Use the parse() method to parse the text.

>>> b = TextBlob("And now for something completely different.")

>>> print(b.parse())

And/CC/O/O now/RB/B-ADVP/O for/IN/B-PP/B-PNP something/NN/B-NP/I-PNP completely/RB/B-ADJP/O different/JJ/I-ADJP/O ././O/O

By default, TextBlob uses pattern’s parser [3].
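The parse output is a single string, so it usually needs to be split before it can be used. The sketch below assumes that the four slash-separated fields of each token are the word, its part-of-speech tag, its chunk tag, and its prepositional-noun-phrase tag, which is how pattern's default format reads. The Token field names and the split_parse helper are hypothetical.

```python
from collections import namedtuple

# Field names are assumptions for this sketch, based on the
# word/POS/chunk/PNP layout of the output shown above.
Token = namedtuple("Token", ["word", "pos", "chunk", "pnp"])

parsed = ("And/CC/O/O now/RB/B-ADVP/O for/IN/B-PP/B-PNP "
          "something/NN/B-NP/I-PNP completely/RB/B-ADJP/O "
          "different/JJ/I-ADJP/O ././O/O")


def split_parse(parsed):
    """Split a parse string into Token tuples, one per whitespace-separated item."""
    # rsplit from the right so a '/' inside the word stays in the first field.
    return [Token(*item.rsplit("/", 3)) for item in parsed.split()]


tokens = split_parse(parsed)
print(tokens[0])
print([t.word for t in tokens if t.chunk in ("B-NP", "I-NP")])
```

Once the tokens are structured like this, filtering by tag (for example, all words inside noun-phrase chunks) becomes an ordinary list comprehension.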

TextBlobs Are Like Python Strings!

You can use Python’s substring syntax.

>>> zen[0:19]

TextBlob("Beautiful is better")

You can use common string methods.

>>> zen.upper()

TextBlob("BEAUTIFUL IS BETTER THAN UGLY. EXPLICIT IS BETTER THAN IMPLICIT. SIMPLE IS BETTER THAN COMPLEX.")

>>> zen.find("Simple")

65

You can make comparisons between TextBlobs and strings.

>>> apple_blob = TextBlob('apples')

>>> banana_blob = TextBlob('bananas')

>>> apple_blob < banana_blob

True

>>> apple_blob == 'apples'

True

You can concatenate and interpolate TextBlobs and strings.

>>> apple_blob + ' and ' + banana_blob

TextBlob("apples and bananas")

>>> "{0} and {1}".format(apple_blob, banana_blob)

'apples and bananas'

n-grams

The TextBlob.ngrams() method returns a list of tuples of n successive words.

>>> blob = TextBlob("Now is better than never.")

>>> blob.ngrams(n=3)

[WordList(['Now', 'is', 'better']), WordList(['is', 'better', 'than']), WordList(['better', 'than', 'never'])]
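Conceptually, an n-gram list is a sliding window of width n over the word list. The following minimal sketch shows that idea in plain Python. It uses a simple whitespace split rather than TextBlob's tokenizer, and the ngrams function here is a stand-in written for this example, not the library's implementation.

```python
def ngrams(words, n=3):
    """Return every run of n consecutive words as a list."""
    if n <= 0:
        return []
    # A text with fewer than n words yields an empty range, hence no n-grams.
    return [words[i:i + n] for i in range(len(words) - n + 1)]


words = "Now is better than never".split()
for gram in ngrams(words, n=3):
    print(gram)
# ['Now', 'is', 'better']
# ['is', 'better', 'than']
# ['better', 'than', 'never']
```

A text of k words yields k - n + 1 n-grams, so five words give three trigrams.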

Get Start and End Indices of Sentences

Use sentence.start and sentence.end to get the indices where a sentence starts and ends within a TextBlob.

>>> for s in zen.sentences:

...     print(s)

...     print("---- Starts at index {}, Ends at index {}".format(s.start, s.end))

Beautiful is better than ugly.

---- Starts at index 0, Ends at index 30

Explicit is better than implicit.

---- Starts at index 31, Ends at index 64

Simple is better than complex.

---- Starts at index 65, Ends at index 95
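The indices are character offsets into the original text, with the end index exclusive, so text[start:end] gives back the sentence. The sketch below recomputes the same offsets with a naive regular expression. The splitter is an assumption made for this example and is much cruder than a real sentence tokenizer (it would break on abbreviations such as "e.g.").

```python
import re

text = ("Beautiful is better than ugly. "
        "Explicit is better than implicit. "
        "Simple is better than complex.")

# Naive rule (an assumption for this sketch): a sentence starts at a
# non-space character and runs up to the next '.', '!' or '?'.
SENTENCE_RE = re.compile(r"[^.!?\s][^.!?]*[.!?]")


def sentence_spans(text):
    """Return (sentence, start, end) triples with text[start:end] == sentence."""
    return [(m.group(), m.start(), m.end()) for m in SENTENCE_RE.finditer(text)]


for sentence, start, end in sentence_spans(text):
    print(sentence)
    print("---- Starts at index {}, Ends at index {}".format(start, end))
```

The gap between one sentence's end (30) and the next one's start (31) is the single space separating them.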

From the official documentation

TextBlob is a Python (2 and 3) library for processing textual data. It provides a simple API for diving into common natural language processing (NLP) tasks such as part-of-speech tagging, noun phrase extraction, sentiment analysis, classification, translation, and more.

from textblob import TextBlob

text = '''

The titular threat of The Blob has always struck me as the ultimate movie

monster: an insatiably hungry, amoeba-like mass able to penetrate

virtually any safeguard, capable of--as a doomed doctor chillingly

describes it--"assimilating flesh on contact.

Snide comparisons to gelatin be damned, it's a concept with the most

devastating of potential consequences, not unlike the grey goo scenario

proposed by technological theorists fearful of

artificial intelligence run rampant.

'''

blob = TextBlob(text)

blob.tags # [('The', 'DT'), ('titular', 'JJ'),

# ('threat', 'NN'), ('of', 'IN'), ...]

blob.noun_phrases # WordList(['titular threat', 'blob',

# 'ultimate movie monster',

# 'amoeba-like mass', ...])

for sentence in blob.sentences:

    print(sentence.sentiment.polarity)

# 0.060

# -0.341

blob.translate(to="es") # 'La amenaza titular de The Blob...

TextBlob stands on the giant shoulders of NLTK and pattern, and plays nicely with both.

Features

- Noun phrase extraction

- Part-of-speech tagging

- Sentiment analysis

- Classification (Naive Bayes, Decision Tree)

- Language translation and detection powered by Google Translate

- Tokenization (splitting text into words and sentences)

- Word and phrase frequencies

- Parsing

- n-grams

- Word inflection (pluralization and singularization) and lemmatization

- Spelling correction

- Add new models or languages through extensions

- WordNet integration

Get it now

$ pip install -U textblob

$ python -m textblob.download_corpora # Downloads the NLTK corpora; skip this step if they were already downloaded when you installed NLTK

Examples

See more examples at the Quickstart guide.

Documentation

Full documentation is available at https://textblob.readthedocs.io/.

Requirements

- Python >= 2.7 or >= 3.3

Project Links

- Docs: https://textblob.readthedocs.io/

- Changelog: https://textblob.readthedocs.io/en/latest/changelog.html

- PyPI: https://pypi.python.org/pypi/TextBlob

- Issues: https://github.com/sloria/TextBlob/issues