Environment:

192.168.111.117 huaicong-1 master

192.168.111.123 huaicong-2 slave

192.168.111.184 huaicong-3 slave

Configure SSH mutual trust (passwordless login between the nodes)

Generate an SSH key pair:

[root@huaicong-1 ~]# ssh-keygen
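The ssh-copy-id commands that follow address the nodes by hostname, so huaicong-1/2/3 must resolve on the master. The article does not say how they resolve. Assuming there is no DNS for these names, here is a minimal sketch that appends the mappings from the environment table to the hosts file and skips names that are already mapped. The function name add_cluster_hosts and the HOSTS_FILE override are hypothetical.

```shell
# Hypothetical helper: map the three node names from the environment table in a
# hosts file so that ssh-copy-id can resolve them without DNS.
# HOSTS_FILE defaults to /etc/hosts; point it at a scratch file to try it out.
add_cluster_hosts() {
  hosts_file="${HOSTS_FILE:-/etc/hosts}"
  while read -r ip name; do
    # Append only if no existing line already lists exactly this hostname,
    # so running the function twice does not create duplicates.
    if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                           END { exit !found }' "$hosts_file" 2>/dev/null; then
      printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
  done <<'EOF'
192.168.111.117 huaicong-1
192.168.111.123 huaicong-2
192.168.111.184 huaicong-3
EOF
}
```

Running `add_cluster_hosts` as root on every node keeps the name mapping identical across the cluster.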

Install the local SSH public key into the matching account on each remote host (including the master itself):

[root@huaicong-1 ~]# ssh-copy-id huaicong-1

[root@huaicong-1 ~]# ssh-copy-id huaicong-2

[root@huaicong-1 ~]# ssh-copy-id huaicong-3

Disable the firewall and SELinux

(Disabling SELinux in /etc/selinux/config only takes effect after the machine is rebooted.)

[root@huaicong-1 ~]# systemctl stop firewalld

[root@huaicong-1 ~]# systemctl disable firewalld
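SELinux is disabled by editing /etc/selinux/config, and the resulting file is shown next. Here is a minimal sketch that makes that edit without an editor. The function name disable_selinux_config and the SELINUX_CONFIG override are hypothetical. Separately, `setenforce 0` switches a running system to permissive mode until the reboot.

```shell
# Hypothetical helper: set SELINUX=disabled in the SELinux config file.
# SELINUX_CONFIG defaults to /etc/selinux/config; the change applies after a reboot.
disable_selinux_config() {
  cfg="${SELINUX_CONFIG:-/etc/selinux/config}"
  # Only the active SELINUX= line is rewritten; SELINUXTYPE= and comments are untouched.
  sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$cfg"
}

# Usage (as root):
#   disable_selinux_config
#   setenforce 0   # switch the running system to permissive mode until the reboot
```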

[root@huaicong-1 ~]# cat /etc/selinux/config
# This file controls the state of SELinux on the system.
# SELINUX= can take one of these three values:
#     enforcing - SELinux security policy is enforced.
#     permissive - SELinux prints warnings instead of enforcing.
#     disabled - No SELinux policy is loaded.
SELINUX=disabled

# SELINUXTYPE= can take one of three two values:
#     targeted - Targeted processes are protected,
#     minimum - Modification of targeted policy. Only selected processes are protected.
#     mls - Multi Level Security protection.
SELINUXTYPE=targeted

Start the installation

Steps on all nodes

Link: https://pan.baidu.com/s/1ExMT... Password: otpq

Download the RPM packages and Docker images, then extract them.

[root@huaicong-1 ~]# tar -zxvf k8s_images.tar.gz

Install docker-ce and resolve its dependencies:

[root@huaicong-1 k8s_images]# rpm -ivh libtool-ltdl-2.4.2-22.el7_3.x86_64.rpm libxml2-python-2.9.1-6.el7_2.3.x86_64.rpm libseccomp-2.3.1-3.el7.x86_64.rpm

[root@huaicong-1 k8s_images]# rpm -ivh docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch.rpm

[root@huaicong-1 k8s_images]# rpm -ivh docker-ce-17.03.2.ce-1.el7.centos.x86_64.rpm

Switch Docker's registry mirror to the DaoCloud mirror in China:

curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://a58c8480.m.daocloud.io

Start Docker and enable it at boot:

[root@huaicong-1 ~]# systemctl start docker; systemctl enable docker

Set the kernel bridge parameters so that bridged traffic goes through iptables; otherwise kubeadm warns about routing:
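The echo command that follows appends the two lines every time it is run, so re-running it leaves duplicates in /etc/sysctl.conf. As an alternative, here is a minimal sketch that sets both keys idempotently. The function name set_bridge_sysctls and the SYSCTL_FILE override are hypothetical. Apply the result with `sysctl -p` as in the article.

```shell
# Hypothetical helper: ensure both bridge-nf-call keys are set to 1 exactly once.
# SYSCTL_FILE defaults to /etc/sysctl.conf, the file used in this article.
set_bridge_sysctls() {
  file="${SYSCTL_FILE:-/etc/sysctl.conf}"
  touch "$file"
  for key in net.bridge.bridge-nf-call-ip6tables net.bridge.bridge-nf-call-iptables; do
    if grep -q "^${key} *=" "$file"; then
      # Key already present: force its value to 1 instead of adding a second line.
      sed -i "s/^${key} *=.*/${key} = 1/" "$file"
    else
      printf '%s = 1\n' "$key" >> "$file"
    fi
  done
}
```

If `sysctl -p` reports that these keys do not exist, the bridge netfilter support may not be loaded yet on that kernel.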

echo "

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

" >> /etc/sysctl.conf

sysctl -p

Install kubeadm, kubelet and kubectl:

[root@huaicong-1 k8s_images]# rpm -ivh kubectl-1.9.0-0.x86_64.rpm kubeadm-1.9.0-0.x86_64.rpm kubelet-1.9.0-0.x86_64.rpm \
kubernetes-cni-0.6.0-0.x86_64.rpm socat-1.7.3.2-2.el7.x86_64.rpm

Load the images:

[root@huaicong-1 ~]# cd k8s_images/docker_images/

[root@huaicong-1 docker_images]# ll

total 999696

-rw-r--r-- 1 root root 192984064 Dec 26 11:32 etcd-amd64_v3.1.10.tar

-rw-r--r-- 1 root root 52185600 Dec 27 20:36 flannel:v0.9.1-amd64.tar

-rw-r--r-- 1 root root 41241088 Dec 27 20:34 k8s-dns-dnsmasq-nanny-amd64_v1.14.7.tar

-rw-r--r-- 1 root root 50545152 Dec 27 23:02 k8s-dns-kube-dns-amd64_1.14.7.tar

-rw-r--r-- 1 root root 42302976 Dec 27 22:59 k8s-dns-sidecar-amd64_1.14.7.tar

-rw-r--r-- 1 root root 210598400 Dec 26 11:28 kube-apiserver-amd64_v1.9.0.tar

-rw-r--r-- 1 root root 137975296 Dec 26 11:30 kube-controller-manager-amd64_v1.9.0.tar

-rw-r--r-- 1 root root 110955520 Dec 29 13:13 kube-proxy-amd64_v1.9.0.tar

-rw-r--r-- 1 root root 121195008 Jan 1 20:24 kubernetes-dashboard_v1.8.1.tar

-rw-r--r-- 1 root root 62920704 Dec 26 11:30 kube-scheduler-amd64_v1.9.0.tar

-rw-r--r-- 1 root root 765440 Dec 27 20:32 pause-amd64_3.0.tar

[root@huaicong-1 docker_images]# for image in *.tar; do echo "$image is loading" && docker load < "$image"; done

Steps on the master node

# Start kubelet

[root@huaicong-1 ~]# systemctl start kubelet && systemctl enable kubelet

Initialize the master node

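Kubernetes supports multiple network plugins, such as flannel, weave and calico. We use flannel here, which requires setting the --pod-network-cidr parameter. Its value must match the Network in flannel's configuration, which is 10.244.0.0/16 by default.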

[root@huaicong-1 ~]# kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

Jan 20 15:22:53 huaicong-1 kubelet: E0120 15:22:53.551735 24627 reflector.go:205] k8s.io/kubernetes/pkg/kubelet/config/apiserver.go:47: Failed to list *v1.Pod: Get https://192.168.111.117:6443/api/v1/pods?fieldSelector=spec.nodeName%3Dhuaicong-1.novalocal&limit=500&resourceVersion=0: dial tcp 192.168.111.117:6443: getsockopt: connection refused

Jan 20 15:22:53 huaicong-1 kubelet: E0120 15:22:53.551845 24627 reflector.go:205] k8s.io/kubernetes/pkg/kubelet/kubelet.go:474: Failed to list *v1.Node: Get https://192.168.111.117:6443/api/v1/nodes?fieldSelector=metadata.name%3Dhuaicong-1.novalocal&limit=500&resourceVersion=0: dial tcp 192.168.111.117:6443: getsockopt: connection refused

Jan 20 15:22:53 huaicong-1 kubelet: W0120 15:22:53.557159 24627 kubelet_network.go:139] Hairpin mode set to "promiscuous-bridge" but kubenet is not enabled, falling back to "hairpin-veth"

Jan 20 15:22:53 huaicong-1 kubelet: I0120 15:22:53.557178 24627 kubelet.go:571] Hairpin mode set to "hairpin-veth"

Jan 20 15:22:53 huaicong-1 kubelet: W0120 15:22:53.557212 24627 cni.go:171] Unable to update cni config: No networks found in /etc/cni/net.d

Jan 20 15:22:53 huaicong-1 kubelet: I0120 15:22:53.557240 24627 client.go:80] Connecting to docker on unix:///var/run/docker.sock

Jan 20 15:22:53 huaicong-1 kubelet: I0120 15:22:53.557249 24627 client.go:109] Start docker client with request timeout=2m0s

Jan 20 15:22:53 huaicong-1 kubelet: W0120 15:22:53.559152 24627 cni.go:171] Unable to update cni config: No networks found in /etc/cni/net.d

Jan 20 15:22:53 huaicong-1 kubelet: W0120 15:22:53.581459 24627 cni.go:171] Unable to update cni config: No networks found in /etc/cni/net.d

Jan 20 15:22:53 huaicong-1 kubelet: I0120 15:22:53.581544 24627 docker_service.go:232] Docker cri networking managed by cni

Jan 20 15:22:53 huaicong-1 kubelet: I0120 15:22:53.599739 24627 docker_service.go:237] Docker Info: &{ID:FMCL:A6AR:LAHK:SS2I:2SJI:ZY73:HYS4:DRRU:AJI4:JOQM:L5XK:ZGLK Containers:0 ContainersRunning:0 ContainersPaused:0 ContainersStopped:0 Images:11 Driver:overlay DriverStatus:[[Backing Filesystem xfs] [Supports d_type true]] SystemStatus:[] Plugins:{Volume:[local] Network:[bridge host macvlan null overlay] Authorization:[] Log:[]} MemoryLimit:true SwapLimit:true KernelMemory:true CPUCfsPeriod:true CPUCfsQuota:true CPUShares:true CPUSet:true IPv4Forwarding:true BridgeNfIptables:true BridgeNfIP6tables:true Debug:false NFd:16 OomKillDisable:true NGoroutines:22 SystemTime:2018-01-20T15:22:53.586684761+08:00 LoggingDriver:json-file CgroupDriver:cgroupfs NEventsListener:0 KernelVersion:3.10.0-514.el7.x86_64 OperatingSystem:CentOS Linux 7 (Core) OSType:linux Architecture:x86_64 IndexServerAddress:https://index.docker.io/v1/ RegistryConfig:0xc420e63960 NCPU:2 MemTotal:3975086080 GenericResources:[] DockerRootDir:/var/lib/docker HTTPProxy: HTTPSProxy: NoProxy: Name:huaicong-1.novalocal Labels:[] ExperimentalBuild:false ServerVersion:17.03.2-ce ClusterStore: ClusterAdvertise: Runtimes:map[runc:{Path:docker-runc Args:[]}] DefaultRuntime:runc Swarm:{NodeID: NodeAddr: LocalNodeState:inactive ControlAvailable:false Error: RemoteManagers:[] Nodes:0 Managers:0 Cluster:0xc4201cb400} LiveRestoreEnabled:false Isolation: InitBinary:docker-init ContainerdCommit:{ID:4ab9917febca54791c5f071a9d1f404867857fcc Expected:4ab9917febca54791c5f071a9d1f404867857fcc} RuncCommit:{ID:54296cf40ad8143b62dbcaa1d90e520a2136ddfe Expected:54296cf40ad8143b62dbcaa1d90e520a2136ddfe} InitCommit:{ID:949e6fa Expected:949e6fa} SecurityOptions:[name=seccomp,profile=default]}

Jan 20 15:22:53 huaicong-1 kubelet: error: failed to run Kubelet: failed to create kubelet: misconfiguration: kubelet cgroup driver: "systemd" is different from docker cgroup driver: "cgroupfs"

Jan 20 15:22:53 huaicong-1 systemd: kubelet.service: main process exited, code=exited, status=1/FAILURE

Jan 20 15:22:53 huaicong-1 systemd: Unit kubelet.service entered failed state.

Jan 20 15:22:53 huaicong-1 systemd: kubelet.service failed.

The log shows that kubelet fails to start.

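The cause is that kubelet's cgroup driver differs from Docker's. Docker uses cgroupfs by default, while kubelet here is configured to use systemd, as the "misconfiguration" error line shows. Change kubelet's driver to cgroupfs in its systemd drop-in: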

[root@huaicong-1 ~]# vim /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

[Service]
Environment="KUBELET_KUBECONFIG_ARGS=--bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf"
Environment="KUBELET_SYSTEM_PODS_ARGS=--pod-manifest-path=/etc/kubernetes/manifests --allow-privileged=true"
Environment="KUBELET_NETWORK_ARGS=--network-plugin=cni --cni-conf-dir=/etc/cni/net.d --cni-bin-dir=/opt/cni/bin"
Environment="KUBELET_DNS_ARGS=--cluster-dns=10.96.0.10 --cluster-domain=cluster.local"
Environment="KUBELET_AUTHZ_ARGS=--authorization-mode=Webhook --client-ca-file=/etc/kubernetes/pki/ca.crt"
Environment="KUBELET_CADVISOR_ARGS=--cadvisor-port=0"
Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs"
Environment="KUBELET_CERTIFICATE_ARGS=--rotate-certificates=true --cert-dir=/var/lib/kubelet/pki"
ExecStart=
ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_SYSTEM_PODS_ARGS $KUBELET_NETWORK_ARGS $KUBELET_DNS_ARGS $KUBELET_AUTHZ_ARGS $KUBELET_CADVISOR_ARGS $KUBELET_CGROUP_ARGS $KUBELET_CERTIFICATE_ARGS $KUBELET_EXTRA_ARGS

(The only line changed is KUBELET_CGROUP_ARGS, from --cgroup-driver=systemd to --cgroup-driver=cgroupfs.) Then reload systemd and restart kubelet:

systemctl daemon-reload && systemctl restart kubelet
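The same drop-in edit is needed on every node, so it can be scripted. Here is a minimal sketch. It assumes the drop-in path used in this article and that the driver appears as `--cgroup-driver=<name>`. The function names and the DROPIN override are hypothetical. `docker info --format '{{.CgroupDriver}}'` reads the same CgroupDriver field shown in the Docker Info log line above.

```shell
# Hypothetical helper: print the --cgroup-driver value set in the kubelet drop-in.
kubelet_cgroup_driver() {
  dropin="${DROPIN:-/etc/systemd/system/kubelet.service.d/10-kubeadm.conf}"
  sed -n 's/.*--cgroup-driver=\([a-z]*\).*/\1/p' "$dropin"
}

# Hypothetical helper: rewrite the drop-in so kubelet uses the given driver.
set_kubelet_cgroup_driver() {
  dropin="${DROPIN:-/etc/systemd/system/kubelet.service.d/10-kubeadm.conf}"
  sed -i "s/--cgroup-driver=[a-z]*/--cgroup-driver=$1/" "$dropin"
}

# Usage on a node with Docker running:
#   want=$(docker info --format '{{.CgroupDriver}}')
#   [ "$(kubelet_cgroup_driver)" = "$want" ] || set_kubelet_cgroup_driver "$want"
#   systemctl daemon-reload && systemctl restart kubelet
```

If the first, failed kubeadm init left files under /etc/kubernetes, you may need to run `kubeadm reset` before retrying the init.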

[root@huaicong-1 ~]# kubeadm init --kubernetes-version=v1.9.0 --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.9.0

[init] Using Authorization modes: [Node RBAC]

[preflight] Running pre-flight checks.

[WARNING Hostname]: hostname "huaicong-1.novalocal" could not be reached

[WARNING Hostname]: hostname "huaicong-1.novalocal" lookup huaicong-1.novalocal on 8.8.4.4:53: no such host

[WARNING FileExisting-crictl]: crictl not found in system path

[preflight] Starting the kubelet service

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [huaicong-1.novalocal kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.111.117]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated sa key and public key.

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "scheduler.conf"

[controlplane] Wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] Wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] Wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] Waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests".

[init] This might take a minute or longer if the control plane images have to be pulled.

[apiclient] All control plane components are healthy after 30.001793 seconds

[uploadconfig] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[markmaster] Will mark node huaicong-1.novalocal as master by adding a label and a taint

[markmaster] Master huaicong-1.novalocal tainted and labelled with key/value: node-role.kubernetes.io/master=""

[bootstraptoken] Using token: 7529d9.235a9be16773cad7

[bootstraptoken] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: kube-dns

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join --token 7529d9.235a9be16773cad7 192.168.111.117:6443 --discovery-token-ca-cert-hash sha256:5cde22b6b719d1af19c711b87016163baafd38e5588786ebd4a2b975a07439fd

Keep this kubeadm join command. If you forget it, you can retrieve the token with kubeadm token list:
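`kubeadm token list` shows the token but not the value for `--discovery-token-ca-cert-hash`. That value is a SHA-256 digest of the cluster CA's DER-encoded public key. The openssl pipeline in this sketch follows the one given in the kubeadm join documentation. The function name ca_cert_hash and the CA_CERT override are hypothetical.

```shell
# Hypothetical helper: recompute the --discovery-token-ca-cert-hash value.
# CA_CERT defaults to the CA that kubeadm init writes into /etc/kubernetes/pki.
ca_cert_hash() {
  ca="${CA_CERT:-/etc/kubernetes/pki/ca.crt}"
  # Extract the public key, convert it to DER (SubjectPublicKeyInfo), hash it,
  # and keep only the hex digest after the last space of openssl's output.
  hash=$(openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex | sed 's/^.* //')
  printf 'sha256:%s\n' "$hash"
}
```

On the master, a token from `kubeadm token list` can then be combined with `$(ca_cert_hash)` to rebuild the join command.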

[root@huaicong-1 k8s_images]# kubeadm token list

TOKEN TTL EXPIRES USAGES DESCRIPTION EXTRA GROUPS

7529d9.235a9be16773cad7 21h 2018-01-21T16:00:46+08:00 authentication,signing The default bootstrap token generated by 'kubeadm init'. system:bootstrappers:kubeadm:default-node-token

As the init output says, kubectl cannot control the cluster yet. First put the admin kubeconfig in place:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Test with kubectl version:

[root@huaicong-1 k8s_images]# kubectl version

Client Version: version.Info{Major:"1", Minor:"9", GitVersion:"v1.9.0", GitCommit:"925c127ec6b946659ad0fd596fa959be43f0cc05", GitTreeState:"clean", BuildDate:"2017-12-15T21:07:38Z", GoVersion:"go1.9.2", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"9", GitVersion:"v1.9.0", GitCommit:"925c127ec6b946659ad0fd596fa959be43f0cc05", GitTreeState:"clean", BuildDate:"2017-12-15T20:55:30Z", GoVersion:"go1.9.2", Compiler:"gc", Platform:"linux/amd64"}

Install the pod network. You can use flannel, macvlan, calico or weave; here we use flannel.

# Download this file

wget https://raw.githubusercontent.com/coreos/flannel/v0.9.1/Documentation/kube-flannel.yml

or use the copy in the offline package.

To change the pod subnet, edit the Network field here and keep it identical to the value passed to kubeadm init --pod-network-cidr=.
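A mismatch between these two values is easy to miss, so a small check can compare them before the manifest is applied. Here is a minimal sketch. It assumes the manifest contains a single `"Network": "..."` line, as in the net-conf.json snippet of this article. The function names are hypothetical.

```shell
# Hypothetical helper: print the "Network" value from flannel's net-conf.json section.
flannel_network() {
  sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "${1:-kube-flannel.yml}"
}

# Compare it with the CIDR given to kubeadm init; returns non-zero on mismatch.
check_pod_cidr() {
  net=$(flannel_network "$2")
  if [ "$net" = "$1" ]; then
    echo "OK: flannel Network matches --pod-network-cidr ($1)"
  else
    echo "MISMATCH: kubeadm uses $1 but flannel uses ${net:-<none>}" >&2
    return 1
  fi
}
```

Example: `check_pod_cidr 10.244.0.0/16 kube-flannel.yml`.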

vim kube-flannel.yml

  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }

Apply it:

kubectl create -f kube-flannel.yml
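After the manifest is applied, the flannel pod can take a while to become Running. Rather than scanning the listing by eye, here is a minimal sketch that filters it. It assumes the `--all-namespaces` column layout shown in this article, where STATUS is the fourth column. The function name not_running_pods is hypothetical. Pods in other legitimate states such as Completed would also be reported.

```shell
# Hypothetical helper: read `kubectl get pod --all-namespaces` output on stdin and
# print namespace/name and status for every pod that is not Running.
# Exits 1 if any such pod is found, 0 otherwise.
not_running_pods() {
  awk 'NR > 1 && $4 != "Running" { print $1 "/" $2 " " $4; bad = 1 } END { exit bad }'
}

# Usage: kubectl get pod --all-namespaces | not_running_pods && echo "all pods Running"
```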

Check the status of all pods; they are all Running:

[root@huaicong-1 k8s_images]# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system etcd-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-apiserver-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-controller-manager-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-dns-6f4fd4bdf-j9gd4 3/3 Running 0 2h

kube-system kube-flannel-ds-jb66l 1/1 Running 0 25s

kube-system kube-proxy-clhpp 1/1 Running 0 2h

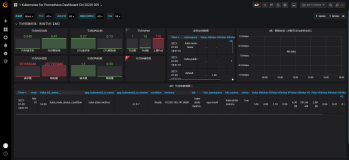

kube-system kube-scheduler-huaicong-1.novalocal 1/1 Running 0 2h部署kubernetes-dashboard

kubernetes-dashboard is an optional component. Frankly, it is not very useful, and its features are quite limited.

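I recommend deploying kubernetes-dashboard together with the master. Otherwise, once worker nodes have joined the cluster, kube-scheduler may place kubernetes-dashboard on a worker node, and its communication with kube-apiserver then needs extra configuration.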

Download the kubernetes-dashboard configuration file, or use kubernetes-dashboard.yaml from the offline package:

[root@huaicong-1 k8s_images]# kubectl create -f kubernetes-dashboard.yaml

[root@huaicong-1 k8s_images]# kubectl get pod --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system etcd-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-apiserver-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-controller-manager-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kube-dns-6f4fd4bdf-j9gd4 3/3 Running 0 2h

kube-system kube-flannel-ds-jb66l 1/1 Running 0 25m

kube-system kube-proxy-clhpp 1/1 Running 0 2h

kube-system kube-scheduler-huaicong-1.novalocal 1/1 Running 0 2h

kube-system kubernetes-dashboard-58f5cb49c-m4f5z 1/1 Running 0 27s

Steps on the worker nodes

As on the master, edit the kubelet configuration file and change the cgroup driver from systemd to cgroupfs:

vim /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

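Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=cgroupfs"

systemctl daemon-reload

systemctl enable kubelet && systemctl restart kubelet

Then run the kubeadm join --xxx command that kubeadm init printed on the master. The join command and the outputs that follow were captured on a run in which huaicong-2 (192.168.111.123) was the master, so the token, address and hash differ from the init output above.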

kubeadm join --token 6c5a50.4fc04fd2f05054ed 192.168.111.123:6443 --discovery-token-ca-cert-hash sha256:fb12670b0c600f310f6d18dae6b19ce7145069afd613dea46bf15be6611306e4

Check on the master node:

[root@huaicong-2 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

huaicong-2.novalocal Ready master 14h v1.9.0

huaicong-3.novalocal Ready <none> 25s v1.9.0

Test the cluster

On the master node, submit a request to create an application.

Here we create an application named httpd-app that uses the httpd image and runs two replica pods:

kubectl run httpd-app --image=httpd --replicas=2

[root@huaicong-2 ~]# kubectl get deployment

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

httpd-app 2 2 2 2 13m

[root@huaicong-2 ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE

httpd-app-5fbccd7c6c-bbl2c 1/1 Running 0 14m 10.244.1.3 huaicong-3.novalocal

httpd-app-5fbccd7c6c-ksw9b 1/1 Running 0 14m 10.244.1.4 huaicong-3.novalocal

Because the resource we created is a Deployment, not a Service, kube-proxy is not involved.

Test by accessing the pods directly at their pod IPs:

[root@huaicong-2 ~]# curl http://10.244.1.3

<html><body><h1>It works!</h1></body></html>

[root@huaicong-2 ~]# curl http://10.244.1.4

<html><body><h1>It works!</h1></body></html>

[root@huaicong-2 ~]#
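The curl commands above use pod IPs copied by hand from `kubectl get pods -o wide`. Here is a minimal sketch that extracts them instead. It assumes the v1.9 column layout shown above, where IP is the sixth column. The function names pod_ips and curl_pods are hypothetical.

```shell
# Hypothetical helper: read `kubectl get pods -o wide` output on stdin and print
# the IP column for pods whose name starts with the given prefix.
pod_ips() {
  awk -v p="$1" 'NR > 1 && index($1, p) == 1 { print $6 }'
}

# Curl each matching pod once and print its IP with the HTTP status code.
curl_pods() {
  for ip in $(kubectl get pods -o wide | pod_ips "$1"); do
    printf '%s ' "$ip"
    curl -s -o /dev/null -w '%{http_code}\n' "http://$ip"
  done
}

# Usage on the master: curl_pods httpd-app
```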

Delete the httpd-app application:

[root@huaicong-2 ~]# kubectl delete deployment httpd-app

[root@huaicong-2 ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

httpd-app-5fbccd7c6c-bbl2c 0/1 Terminating 0 56m

httpd-app-5fbccd7c6c-ksw9b 0/1 Terminating 0 56m

At this point, the basic Kubernetes cluster installation is complete.

Deploy the heapster add-on

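Next, install Heapster to add usage statistics and monitoring to the cluster and dashboards to the Dashboard. InfluxDB serves as Heapster's backend storage. Start the deployment: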

The images used are listed at the end of this article. Download the manifests:

mkdir -p ~/k8s/heapster

cd ~/k8s/heapster

wget https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/grafana.yaml

wget https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/rbac/heapster-rbac.yaml

wget https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/heapster.yaml

wget https://raw.githubusercontent.com/kubernetes/heapster/master/deploy/kube-config/influxdb/influxdb.yaml

kubectl create -f ./

Finally, confirm that all pods are Running, then open the Dashboard; the cluster's usage statistics are shown there as dashboards.

Images used in this installation:

gcr.io/google_containers/kube-proxy-amd64:v1.9.0

gcr.io/google_containers/kube-apiserver-amd64:v1.9.0

gcr.io/google_containers/kube-controller-manager-amd64:v1.9.0

gcr.io/google_containers/kube-scheduler-amd64:v1.9.0

quay.io/coreos/flannel:v0.9.1-amd64

gcr.io/google_containers/k8s-dns-sidecar-amd64:1.14.7

gcr.io/google_containers/k8s-dns-kube-dns-amd64:1.14.7

gcr.io/google_containers/k8s-dns-dnsmasq-nanny-amd64:1.14.7

gcr.io/google_containers/etcd-amd64:3.1.10

gcr.io/google_containers/pause-amd64:3.0

gcr.io/google_containers/kubernetes-dashboard-amd64:v1.8.1

gcr.io/google_containers/heapster-influxdb-amd64:v1.3.3

gcr.io/google_containers/heapster-grafana-amd64:v4.4.3

gcr.io/google_containers/heapster-amd64:v1.4.2

Link: https://pan.baidu.com/s/1ExMT... Password: otpq
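You can check that a node actually has every image listed above, for example after the docker load loop. Here is a minimal sketch that compares the list with what Docker reports. The function name missing_images and the file names required.txt and loaded.txt are hypothetical. `docker images --format '{{.Repository}}:{{.Tag}}'` prints one repository:tag per line.

```shell
# Hypothetical helper: print the lines of file $1 (required images) that do not
# appear as whole lines in file $2 (images present on the node).
# grep exits 1 when nothing is missing, so "|| echo" can report success.
missing_images() {
  grep -vxF -f "$2" "$1"
}

# Usage on a node (required.txt holds the image list above, one per line):
#   docker images --format '{{.Repository}}:{{.Tag}}' > loaded.txt
#   missing_images required.txt loaded.txt || echo "all images present"
```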

Reposted from SegmentFault: "Offline deployment of kubernetes v1.9.0" (离线部署kubernetes v1.9.0).