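1. Introduction

ELK is a real-time log analysis platform. It gives developers and operations staff real-time log analysis, making it easier to understand system state and track down code problems.

2. The E in ELK (Elasticsearch):

(2.1) Install the dependency first; the official documentation calls for Java 1.8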

yum -y install java-1.8.0-openjdk

Install Elasticsearch:

tar zvxf elasticsearch-1.7.0.tar.gz

mv elasticsearch-1.7.0 /usr/local/elasticsearch

cd /usr/local/elasticsearch/config

cp elasticsearch.yml elasticsearch.yml.bak

vim elasticsearch.yml (edit it as follows)

    cluster.name: elasticsearch
    node.name: syk
    node.master: true
    node.data: true
    index.number_of_shards: 5
    index.number_of_replicas: 1    # replicas per shard
    path.data: /usr/local/elasticsearch/data
    path.conf: /usr/local/elasticsearch/conf
    path.work: /usr/local/elasticsearch/work
    path.plugins: /usr/local/elasticsearch/plugins
    path.logs: /usr/local/elasticsearch/logs
    bootstrap.mlockall: true    # lock the process memory so it is not swapped out
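With these settings, every new index gets 5 primary shards plus 1 replica of each. As a quick sanity check, the arithmetic can be sketched as follows; the function name is purely illustrative and not part of Elasticsearch.

```python
def total_shard_copies(number_of_shards: int, number_of_replicas: int) -> int:
    """Count primaries plus all replica copies for a single index."""
    return number_of_shards * (1 + number_of_replicas)


# Values taken from the elasticsearch.yml settings above.
print(total_shard_copies(5, 1))  # 10
```

On a single-node setup like this one, a replica cannot be allocated to the same node as its primary, so the 5 replica copies stay unassigned until a second node joins the cluster.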

Start it: /usr/local/elasticsearch/bin/elasticsearch -d

Check with netstat -tlnp

A java process should be listening on ports 9200 and 9300
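The same check can be done from another machine without netstat. The following is a minimal sketch using only the Python standard library; the helper names are hypothetical, and it only tests that the TCP ports accept connections, not that Elasticsearch is healthy.

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def check_elasticsearch_ports(host: str) -> dict:
    """Probe the HTTP (9200) and transport (9300) ports mentioned above."""
    return {port: port_is_open(host, port) for port in (9200, 9300)}
```

Calling check_elasticsearch_ports("192.168.137.50") returns a dict that maps each port to True or False.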

curl http://192.168.137.50:9200

Output:

    {
      "status" : 200,
      "name" : "syk",
      "cluster_name" : "elasticsearch",
      "version" : {
        "number" : "1.7.0",
        "build_hash" : "929b9739cae115e73c346cb5f9a6f24ba735a743",
        "build_timestamp" : "2015-07-16T14:31:07Z",
        "build_snapshot" : false,
        "lucene_version" : "4.10.4"
      },
      "tagline" : "You Know, for Search"
    }
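When scripting this check, the JSON body can be parsed rather than read by eye. A small sketch, assuming the response has the fields shown above; the sample string is an abridged copy of that output and summarize_node is a hypothetical helper.

```python
import json

# Abridged copy of the response body shown above.
SAMPLE = '''{
  "status" : 200,
  "name" : "syk",
  "cluster_name" : "elasticsearch",
  "version" : { "number" : "1.7.0", "lucene_version" : "4.10.4" },
  "tagline" : "You Know, for Search"
}'''


def summarize_node(body: str) -> str:
    """Build a one-line summary from the root endpoint's JSON body."""
    info = json.loads(body)
    version = info["version"]["number"]
    return f'{info["name"]}@{info["cluster_name"]} es={version} status={info["status"]}'


print(summarize_node(SAMPLE))  # syk@elasticsearch es=1.7.0 status=200
```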

(2.2) Use the official service wrapper script:

https://codeload.github.com/elastic/elasticsearch-servicewrapper/zip/master

Upload it to the server with the rz command

unzip elasticsearch-servicewrapper-master.zip

mv elasticsearch-servicewrapper-master/service/ /usr/local/elasticsearch/bin/

cd /usr/local/elasticsearch/bin/service

./elasticsearch install (automatically creates the service script under /etc/init.d)

/etc/init.d/elasticsearch restart

    curl -XGET 'http://192.168.137.50:9200/_count?pretty' -d '
    {
      "query": {
        "match_all": {}
      }
    }
    '

This returns:

    {
      "count" : 0,
      "_shards" : {
        "total" : 0,
        "successful" : 0,
        "failed" : 0
      }
    }
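The same _count query can be prepared from Python with the standard library alone. This is a sketch rather than an official client: build_count_request and parse_count are hypothetical helpers, and the request is only constructed here, not sent.

```python
import json
import urllib.request


def build_count_request(base_url: str) -> urllib.request.Request:
    """Prepare the match_all _count request without sending it."""
    body = json.dumps({"query": {"match_all": {}}}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/_count?pretty",
        data=body,
        headers={"Content-Type": "application/json"},
        method="GET",
    )


def parse_count(response_body: str) -> int:
    """Extract the document count from a _count response body."""
    return json.loads(response_body)["count"]


req = build_count_request("http://192.168.137.50:9200")
print(req.get_method(), req.full_url)
print(parse_count('{"count": 0, "_shards": {"total": 0, "successful": 0, "failed": 0}}'))
```

To actually send it, pass req to urllib.request.urlopen and feed the decoded response body to parse_count.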

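(2.3) Web interfaces built on the REST API (supporting create, delete, update, and query)

Install the plugin: /usr/local/elasticsearch/bin/plugin -i elasticsearch/marvel/latest (installs automatically)

Access in a browser: http://192.168.137.50:9200/_plugin/marvel

Install the cluster management plugin: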

/usr/local/elasticsearch/bin/plugin -i mobz/elasticsearch-head

Alternatively, download https://github.com/mobz/elasticsearch-head/archive/master.zip and upload it to the server with rz

unzip elasticsearch-head-master.zip

mv elasticsearch-head-master plugins/head

Access in a browser: http://192.168.137.50:9200/_plugin/head

This displays your shards and shard replicas in a web page.

3. The L in ELK (Logstash):

(3.1) Install Logstash:

i) Install with yum, as provided officially:

1. rpm --import https://packages.elastic.co/GPG-KEY-elasticsearch

2. vim /etc/yum.repos.d/logstash.repo

Add:

    [logstash-2.3]
    name=Logstash repository for 2.3.x packages
    baseurl=https://packages.elastic.co/logstash/2.3/centos
    gpgcheck=1
    gpgkey=https://packages.elastic.co/GPG-KEY-elasticsearch
    enabled=1

3. yum --enablerepo=logstash-2.3 -y install logstash

ii) Install from the tarball:

tar zvxf logstash-1.5.3.tar.gz

mv logstash-1.5.3 /usr/local/logstash

(3.2) Test

/usr/local/logstash/bin/logstash -e 'input { stdin{} } output { stdout{codec => rubydebug} }'

Type hehe

Output:

    Logstash startup completed
    hehe
    {
      "message" => "hehe",
      "@version" => "1",
      "@timestamp" => "2016-08-07T17:46:10.836Z",
      "host" => "web10.syk.com"
    }
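The rubydebug output shows that every event carries four fields: message, @version, @timestamp and host. To make that structure concrete, here is a rough Python imitation; make_event is a hypothetical helper and not how Logstash builds events internally.

```python
import socket
from datetime import datetime, timezone


def make_event(message: str, host: str = "") -> dict:
    """Build a dict with the four fields seen in the rubydebug output."""
    now = datetime.now(timezone.utc)
    # Millisecond precision with a trailing Z, like "2016-08-07T17:46:10.836Z".
    timestamp = now.strftime("%Y-%m-%dT%H:%M:%S.") + f"{now.microsecond // 1000:03d}Z"
    return {
        "message": message,
        "@version": "1",
        "@timestamp": timestamp,
        "host": host or socket.gethostname(),
    }


print(make_event("hehe", host="web10.syk.com"))
```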

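This means Logstash is working correctly.

(3.3) Write a Logstash configuration file

Note:

Both an input{} and an output{} section are required

Syntax: settings are assigned with =>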

vim /etc/logstash.conf

    input {
      file {
        path => "/var/log/syk.log"
      }
    }
    output {
      file {
        path => "/tmp/%{+YYYY-MM-dd}.syk.gz"
        gzip => true
      }
    }

Start Logstash: /usr/local/logstash/bin/logstash -f /etc/logstash.conf

cd /var/log

cat maillog >> syk.log (append to syk.log)

A date-named syk.gz compressed file now appears under /tmp
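To inspect what was written without decompressing the file by hand, the gzip output can be read directly. A minimal sketch using Python's gzip module; read_gzip_lines is a hypothetical helper, and the demo writes its own throwaway file so that it runs without Logstash.

```python
import gzip
import os
import tempfile


def read_gzip_lines(path: str) -> list:
    """Return the decoded lines of a gzip-compressed text file."""
    with gzip.open(path, mode="rt", encoding="utf-8", errors="replace") as handle:
        return [line.rstrip("\n") for line in handle]


# Round-trip demo on a temporary file.
demo_path = os.path.join(tempfile.mkdtemp(), "demo.syk.gz")
with gzip.open(demo_path, mode="wt", encoding="utf-8") as handle:
    handle.write("line one\nline two\n")
print(read_gzip_lines(demo_path))  # ['line one', 'line two']
```

For the real output, pass the path of the date-named file under /tmp instead of demo_path.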

(3.4) Use Redis to store Logstash events:

yum -y install redis (Redis runs on a separate server)

vim /etc/redis.conf (edit)

bind 192.168.137.52

Install Logstash on the 192.168.137.52 server as well

Write the configuration file:

vim /etc/logstash.conf

    input {
      file {
        path => "/var/log/syk.log"
      }
    }
    output {
      redis {
        data_type => "list"
        key => "system-messages"
        host => "192.168.137.52"
        port => "6379"
        db => "1"
      }
    }

Start Logstash on the .52 server:

/usr/local/logstash/bin/logstash -f /etc/logstash.conf

cd /var/log

cat maillog >> syk.log (append to syk.log)

Check inside Redis:

redis-cli -h 192.168.137.52 -p 6379

select 1

keys * (the system-messages key should be listed)

llen system-messages (shows the length of the system-messages list)
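With data_type set to "list", the Redis key acts as a first-in, first-out queue: the shipper appends events to the tail of the list (RPUSH) and a consumer takes them from the head. The sketch below imitates that behaviour in memory to show why LLEN grows while nothing is consuming. ListQueue is a hypothetical stand-in, not a Redis client.

```python
from collections import deque


class ListQueue:
    """In-memory stand-in for one Redis list key (not a Redis client)."""

    def __init__(self) -> None:
        self._items = deque()

    def rpush(self, value: str) -> int:
        """Append to the tail and return the new length, like RPUSH."""
        self._items.append(value)
        return len(self._items)

    def lpop(self):
        """Remove and return the head, or None when empty, like LPOP."""
        return self._items.popleft() if self._items else None

    def llen(self) -> int:
        """Return the current length, like LLEN."""
        return len(self._items)


queue = ListQueue()
for line in ("first log line", "second log line"):
    queue.rpush(line)   # shipper side: events are appended
print(queue.llen())     # 2
print(queue.lpop())     # first log line
queue.lpop()            # a consumer drains the rest
print(queue.llen())     # 0
```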

(3.5) Ship the logs collected by Logstash to Elasticsearch

On the 192.168.137.50 server, write a Logstash configuration file:

vim /etc/logstash.conf

    input {
      redis {
        data_type => "list"
        key => "system-messages"
        host => "192.168.137.52"
        port => "6379"
        db => "1"
      }
    }
    output {
      elasticsearch {
        host => "192.168.137.50"
        protocol => "http"
        index => "system-messages-%{+YYYY.MM.dd}"
      }
    }

Start Logstash:

/usr/local/logstash/bin/logstash -f /etc/logstash.conf

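If we now check LLEN system-messages in Redis, it has dropped to 0, which means the data has been transferred to Elasticsearch.

Access in a browser: http://192.168.137.50:9200/_plugin/head/

A new system-messages-2016.08.07 index appears, along with its shards and replicas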
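The index name comes from the index setting "system-messages-%{+YYYY.MM.dd}", where the date part is filled in from each event's @timestamp. A rough Python imitation of that expansion follows; daily_index is a hypothetical helper, and the timestamp is the one from the earlier rubydebug output.

```python
from datetime import datetime, timezone


def daily_index(prefix: str, timestamp: str) -> str:
    """Mimic "<prefix>-%{+YYYY.MM.dd}" for one event @timestamp (UTC)."""
    when = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%S.%fZ").replace(tzinfo=timezone.utc)
    return f"{prefix}-{when:%Y.%m.%d}"


print(daily_index("system-messages", "2016-08-07T17:46:10.836Z"))
# system-messages-2016.08.07
```

Because the date is taken from @timestamp in UTC, events logged close to midnight local time can land in the neighbouring day's index.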

4. The K in ELK (Kibana):

(4.1) Install:

Just extract the archive and mv it into place

cd /usr/local/kibana/config/

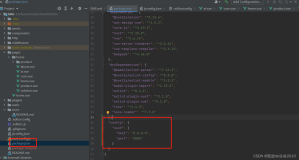

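Edit kibana.yml and point it at Elasticsearch (the key is elasticsearch_url in Kibana 4.0/4.1, the releases compatible with Elasticsearch 1.7):

elasticsearch_url: "http://192.168.137.50:9200"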

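Start it:

nohup /usr/local/kibana/bin/kibana & (default port 5601)

Access in a browser:

The remaining operations need screenshots to explain, so they are not covered here.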

This article is reposted from sykmiao's 51CTO blog. Original link: http://blog.51cto.com/syklinux/1836732. To repost it, please contact the original author.