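Environment:

OS: RHEL 5.7, 64-bit

Hadoop: hadoop-0.20.2

HBase: hbase-0.90.5

Pig: pig-0.9.2

The access log file can be downloaded from the attachment linked at the end of this article.

The log is stored locally at: /home/hadoop/access_log.txt

The log format is: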

220.181.108.151 - - [31/Jan/2012:00:02:32 +0800] "GET /home.php?mod=space&uid=158&do=album&view=me&from=space HTTP/1.1" 200 8784 "-" "Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)"

1) Create an input directory in HDFS:

grunt> mkdir input

grunt> ls

hdfs://node1.test.com:9000/user/hadoop/input <dir>

grunt> cd input

grunt> ls

grunt> pwd

hdfs://node1.test.com:9000/user/hadoop/input

2) Copy the log file from the local file system into log.txt in the current HDFS directory:

grunt> copyFromLocal /home/hadoop/access_log.txt log.txt

2014-10-14 10:53:49,667 [Thread-7] INFO org.apache.hadoop.hdfs.DFSClient - Exception in createBlockOutputStream java.net.NoRouteToHostException: No route to host

2014-10-14 10:53:49,667 [Thread-7] INFO org.apache.hadoop.hdfs.DFSClient - Abandoning block blk_-7546596643624545852_1118

2014-10-14 10:53:49,669 [Thread-7] INFO org.apache.hadoop.hdfs.DFSClient - Excluding datanode 172.16.41.154:50010

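# List the directory to check the file (the copy completed even though one datanode was excluded in the messages above)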

grunt> ls

hdfs://node1.test.com:9000/user/hadoop/input/log.txt<r 2> 7118627

3) Load the file into relation a, using a space (' ') as the field delimiter:

grunt> a = load '/user/hadoop/input/log.txt'

>> using PigStorage(' ')

>> AS (ip,a1,a2,a3,a4,a5,a6,a7,a8);
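Fields declared without a type, as above, default to bytearray in Pig. As a minimal sketch, assuming only the ip field needs an explicit type, the same load could declare it as chararray and then print the resulting schema:

```pig
-- Same load as above, but with ip declared as chararray.
-- The remaining fields are left untyped (bytearray by default).
a = LOAD '/user/hadoop/input/log.txt'
    USING PigStorage(' ')
    AS (ip:chararray, a1, a2, a3, a4, a5, a6, a7, a8);

-- Print the schema of a to confirm the field names and types.
DESCRIBE a;
```

Because the log line is split on every space, quoted values such as the user agent are broken into several fields; only the first field (the client IP) is used in the steps that follow.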

4) Keep only the ip field:

grunt> b = foreach a generate ip;

5) Group b by ip:

grunt> c = group b by ip;

6) Count the hits for each ip:

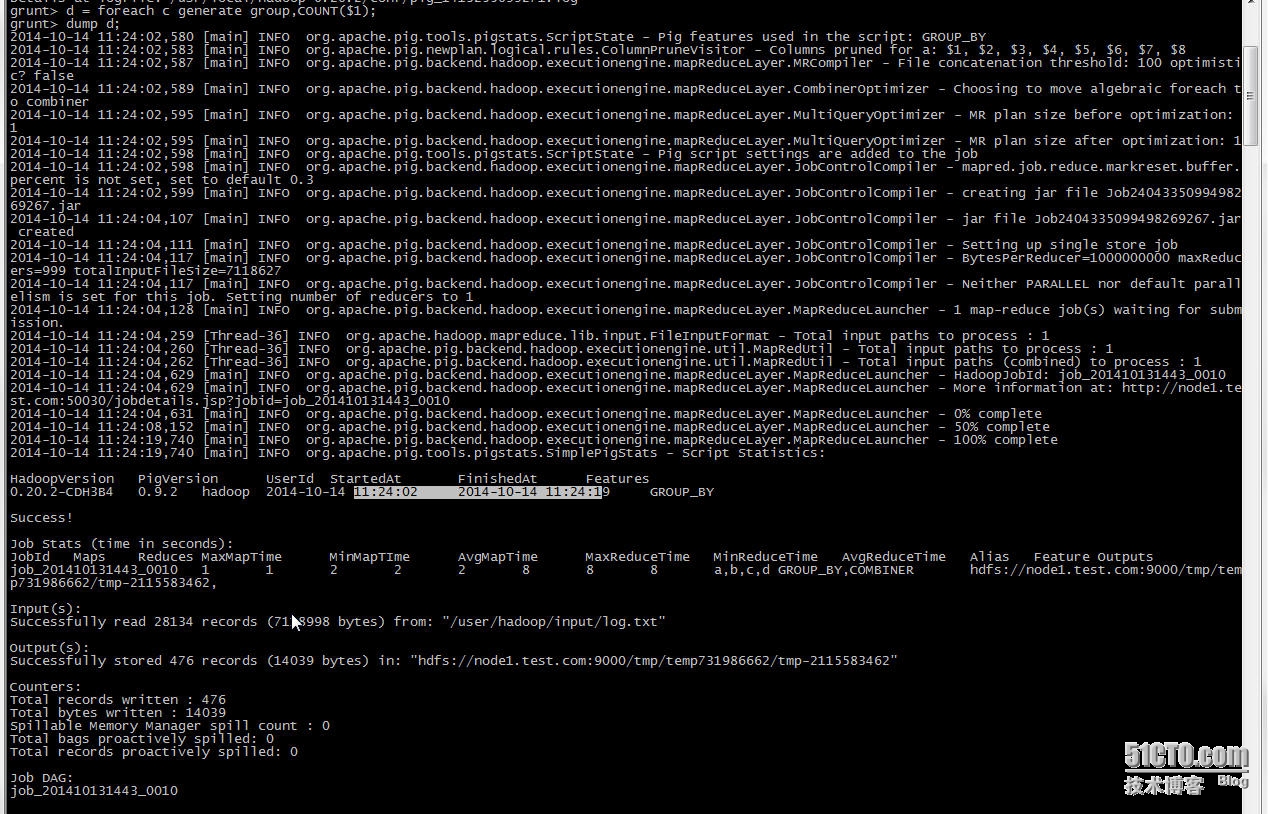

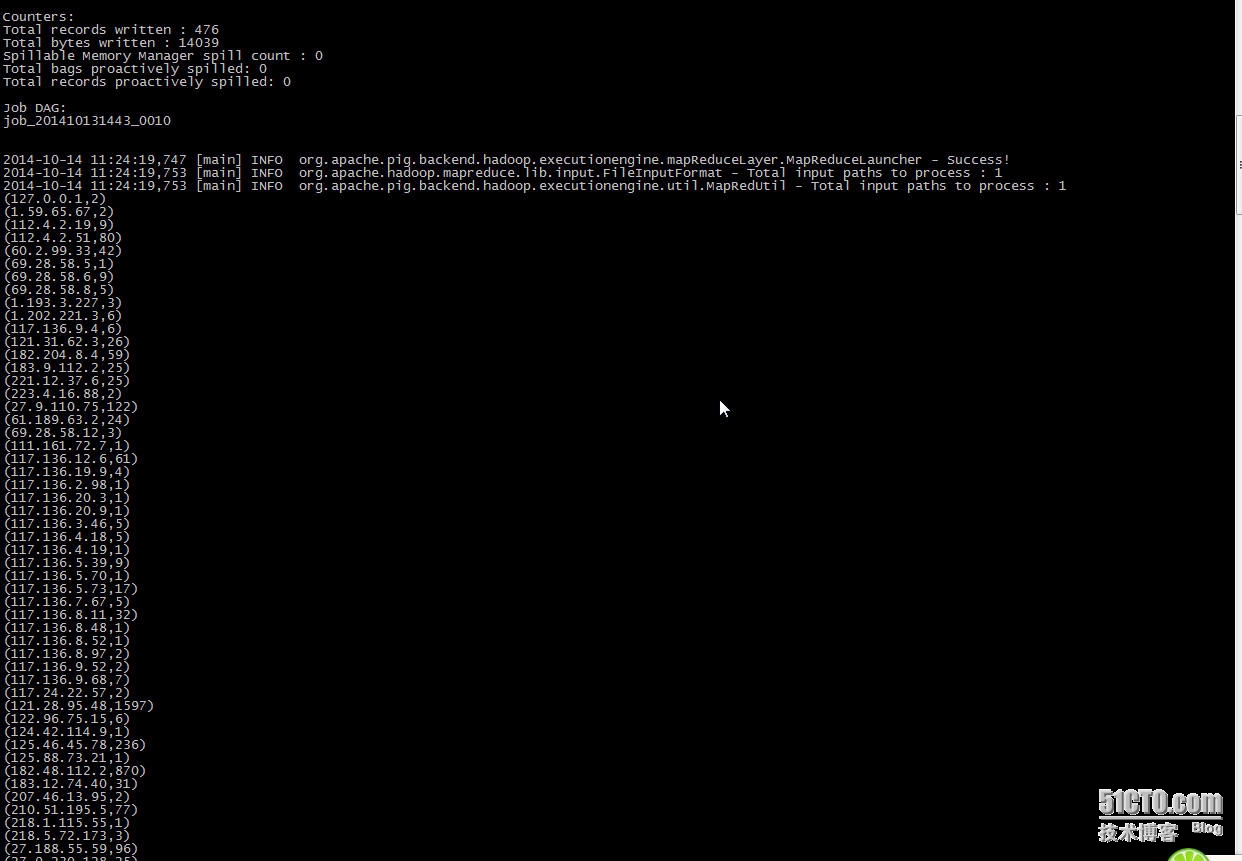

grunt> d = foreach c generate group,COUNT($1);
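The count above can also be written with named fields, which makes it easy to sort the result. The following is a sketch rather than part of the original session; the field names hits and the relation names e and f are assumptions introduced for illustration:

```pig
-- In c, the grouped bag is named b (after the relation that was grouped),
-- so COUNT(b) is equivalent to COUNT($1).
d = FOREACH c GENERATE group AS ip, COUNT(b) AS hits;

-- Sort by hit count, highest first.
e = ORDER d BY hits DESC;

-- Keep only the first 10 rows of the sorted relation.
f = LIMIT e 10;

DUMP f;
```

Sorting before limiting is what makes the output the ten most active IPs rather than an arbitrary ten rows.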

Display the result:

grunt> dump d;
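For repeated runs, the interactive steps above could be collected into a script and the result written to HDFS instead of printed. This is a minimal sketch; the script name ip_count.pig and the output path are assumptions, not part of the original walkthrough:

```pig
-- ip_count.pig (hypothetical file name)

-- Load the access log, splitting each line on single spaces.
a = LOAD '/user/hadoop/input/log.txt'
    USING PigStorage(' ')
    AS (ip, a1, a2, a3, a4, a5, a6, a7, a8);

-- Keep only the client IP.
b = FOREACH a GENERATE ip;

-- Collect all rows that share the same IP.
c = GROUP b BY ip;

-- Emit one row per IP with its hit count.
d = FOREACH c GENERATE group, COUNT($1);

-- Write the result to HDFS. The output path is an assumption
-- and must not already exist, or the job will fail.
STORE d INTO '/user/hadoop/output/ip_count';
```

Such a script would be run from the command line with `pig ip_count.pig`.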

Attachment: http://down.51cto.com/data/2364945

Reposted from shine_forever's 51CTO blog. Original link: http://blog.51cto.com/shineforever/1563850