How the ELK architecture works:

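Logstash collects the nginx logs, filters each entry and splits it into fields, and sends the resulting structured data to Elasticsearch. Elasticsearch (ES) stores the logs and builds the index. Kibana provides the graphical interface: it wraps the ES query API and offers friendly pages for searching and statistics.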

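In production, Logstash is installed as an agent on every host whose logs you want to collect. To relieve the output pressure that many agents put on ES, define a broker (usually Redis) to buffer the incoming logs, then define a Logstash server that reads everything from the broker and writes it to the ES cluster. For high availability of the broker, Redis itself can be deployed as a cluster.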

The single-node test installation deploys only one ES node, one Logstash agent, one Kibana, and one nginx.

Installation and test procedure:

1. Install nginx-1.12.0

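#Install gcc and the other build tools

sudo yum groupinstall -y 'Development Tools'

#Install the pcre and zlib development libraries that nginx needs

sudo yum install -y pcre-devel zlib-devel

#Create the nginx installation directory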

mkdir nginx

#Configure, compile, and install nginx

tar zxf nginx-1.12.0.tar.gz

cd nginx-1.12.0

./configure --prefix=/home/hoewon/nginx

make

make install

#Minimal nginx configuration (in /home/hoewon/nginx/conf/nginx.conf)

user root;

#Start nginx

sudo /home/hoewon/nginx/sbin/nginx

2. Install Logstash

#Extract the archive

tar zxf logstash-5.5.2.tar.gz

#Create a symlink to the grok-patterns file

ln -s $LOGSTASH_HOME/vendor/bundle/jruby/1.9/gems/logstash-patterns-core-4.1.1/patterns/grok-patterns grok-patterns

#Append the nginx log patterns to grok-patterns. Matching http_x_forwarded_for did not work well, so that part is commented out

NGUSER %{NGUSERNAME}

NGINXACCESS %{IPORHOST:clientip} - %{NOTSPACE:remote_user} \[%{HTTPDATE:timestamp}\] \"(?:%{WORD:verb} %{NOTSPACE:request}(?: HTTP/%{NUMBER:httpversion})?|%{DATA:rawrequest})\" %{NUMBER:response} (?:%{NUMBER:bytes}|-) %{QS:referrer} %{QS:agent}

# %{NOTSPACE:http_x_forwarded_for}

#Write the Logstash pipeline configuration file

vim simple.conf

input {

file{

path => ["/home/hoewon/nginx/logs/access.log"]

type => "nginxlog"

start_position => "beginning"

}

}

filter{

grok{

match => {

"message" => "%{NGINXACCESS}"

}

}

}

output{

stdout{

codec => rubydebug

}

}

#Validate the configuration file

bin/logstash -t -f simple.conf

#Run Logstash and check the output

bin/logstash -f simple.conf

The output looks like this:

{

"request" => "/favicon.ico",

"agent" => "\"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/53.0.2785.104 Safari/537.36 Core/1.53.3368.400 QQBrowser/9.6.11974.400\"",

"verb" => "GET",

"message" => "192.168.247.1 - - [08/Sep/2017:15:25:46 +0800] \"GET /favicon.ico HTTP/1.1\" 403 571 \"-\" \"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/53.0.2785.104 Safari/537.36 Core/1.53.3368.400 QQBrowser/9.6.11974.400\"",

"type" => "nginxlog",

"remote_user" => "-",

"path" => "/home/hoewon/nginx/logs/access.log",

"referrer" => "\"-\"",

"@timestamp" => 2017-09-08T08:04:19.534Z,

"response" => "403",

"bytes" => "571",

"clientip" => "192.168.247.1",

"@version" => "1",

"host" => "kube01",

"httpversion" => "1.1",

"timestamp" => "08/Sep/2017:15:25:46 +0800"

}
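
To make it easier to see how the NGINXACCESS pattern splits a line into the fields shown above, here is a rough Python equivalent. It is only a sketch: the regular expression is a hand-written approximation of the grok pattern (not what Logstash runs internally), the function name `parse_access_line` is made up for illustration, and the user agent in the sample line is shortened.

```python
import re

# Hand-written approximation of the NGINXACCESS grok pattern (an assumption,
# not the expression grok actually compiles). Group names follow the grok fields.
NGINX_ACCESS = re.compile(
    r'(?P<clientip>\S+) - (?P<remote_user>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?:(?P<verb>\w+) (?P<request>\S+)(?: HTTP/(?P<httpversion>[\d.]+))?'
    r'|(?P<rawrequest>[^"]*))" '
    r'(?P<response>\d+) (?:(?P<bytes>\d+)|-) '
    r'(?P<referrer>"[^"]*") (?P<agent>"[^"]*")'
)


def parse_access_line(line):
    """Return the named fields of one access-log line, or None if it does not match."""
    m = NGINX_ACCESS.match(line)
    if m is None:
        return None
    # Drop groups that did not take part in the match
    # (for example rawrequest when the request line is well formed).
    return {k: v for k, v in m.groupdict().items() if v is not None}


# Sample line from the output above, with the user agent shortened.
sample = ('192.168.247.1 - - [08/Sep/2017:15:25:46 +0800] '
          '"GET /favicon.ico HTTP/1.1" 403 571 "-" '
          '"Mozilla/5.0 (Windows NT 6.1; WOW64)"')

if __name__ == "__main__":
    print(parse_access_line(sample))
```

As in the Logstash output, every value stays a string, and the referrer and agent keep their surrounding double quotes because the pattern captures the quoted string as a whole.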

If the input and output test correctly, change the output plugin to elasticsearch:

input {

file{

path => ["/home/hoewon/nginx/logs/access.log"]

type => "nginxlog"

start_position => "beginning"

}

}

filter{

grok{

match => {

"message" => "%{NGINXACCESS}"

}

}

}

output{

elasticsearch{

hosts => ["192.168.247.142:9200"]

index => "nginxlog"

}

}

3. Install Elasticsearch

#Extract the archive

tar zxf elasticsearch-5.5.2.tar.gz

#Raise the open-file and process limits for the user that runs ES

sudo vim /etc/security/limits.conf

#<domain> <type> <item> <value>

hoewon soft nofile 65536

hoewon hard nofile 65536

hoewon soft nproc 2048

hoewon hard nproc 2048

#modify the vm.max_map_count

sudo vim /etc/sysctl.conf

vm.max_map_count=262144

#Apply the kernel settings

sudo sysctl -p
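
Before starting Elasticsearch it can help to confirm that the limits above actually took effect for the current login session. The following is a minimal sketch, assuming a Linux host and that it is run as the user who will start ES. The function names `check_es_limits` and `read_current_limits` are made up for illustration, and the thresholds are simply the values set in limits.conf and sysctl.conf above.

```python
import resource

# Thresholds copied from the limits.conf and sysctl.conf values above.
REQUIRED = {"nofile": 65536, "nproc": 2048, "max_map_count": 262144}


def check_es_limits(nofile, nproc, max_map_count, required=REQUIRED):
    """Return a list of problems; an empty list means every limit is high enough."""
    actual = {"nofile": nofile, "nproc": nproc, "max_map_count": max_map_count}
    problems = []
    for name, minimum in required.items():
        value = actual[name]
        # RLIM_INFINITY means "unlimited", which always satisfies the minimum.
        if value != resource.RLIM_INFINITY and value < minimum:
            problems.append(f"{name} is {value}, expected at least {minimum}")
    return problems


def read_current_limits():
    """Read the soft limits of this process and the kernel's vm.max_map_count."""
    nofile, _ = resource.getrlimit(resource.RLIMIT_NOFILE)
    nproc, _ = resource.getrlimit(resource.RLIMIT_NPROC)
    with open("/proc/sys/vm/max_map_count") as f:
        max_map_count = int(f.read().strip())
    return nofile, nproc, max_map_count


if __name__ == "__main__":
    try:
        issues = check_es_limits(*read_current_limits())
    except OSError as exc:
        issues = [f"could not read limits: {exc}"]
    for issue in issues:
        print(issue)
```

Changes to limits.conf only apply to new login sessions, so the script should be run after logging in again.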

#vim $ES_HOME/config/elasticsearch.yml

network.host: 192.168.247.142    # or 0.0.0.0

http.port: 9200

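#For a cluster, also change the settings below. Nodes that share the same cluster.name join the same cluster and find each other on the transport port (9300 by default)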

node.name: nodename

cluster.name: clustername

#Start Elasticsearch

$ES_HOME/bin/elasticsearch

4. Install Kibana

#Extract the archive

tar zxf kibana-5.5.2-linux-x86_64.tar.gz

#Edit the Kibana configuration

vim $KIBANA_HOME/config/kibana.yml

server.host: "192.168.247.142"

elasticsearch.url: "http://192.168.247.142:9200"

#Start Kibana

$KIBANA_HOME/bin/kibana

Testing:

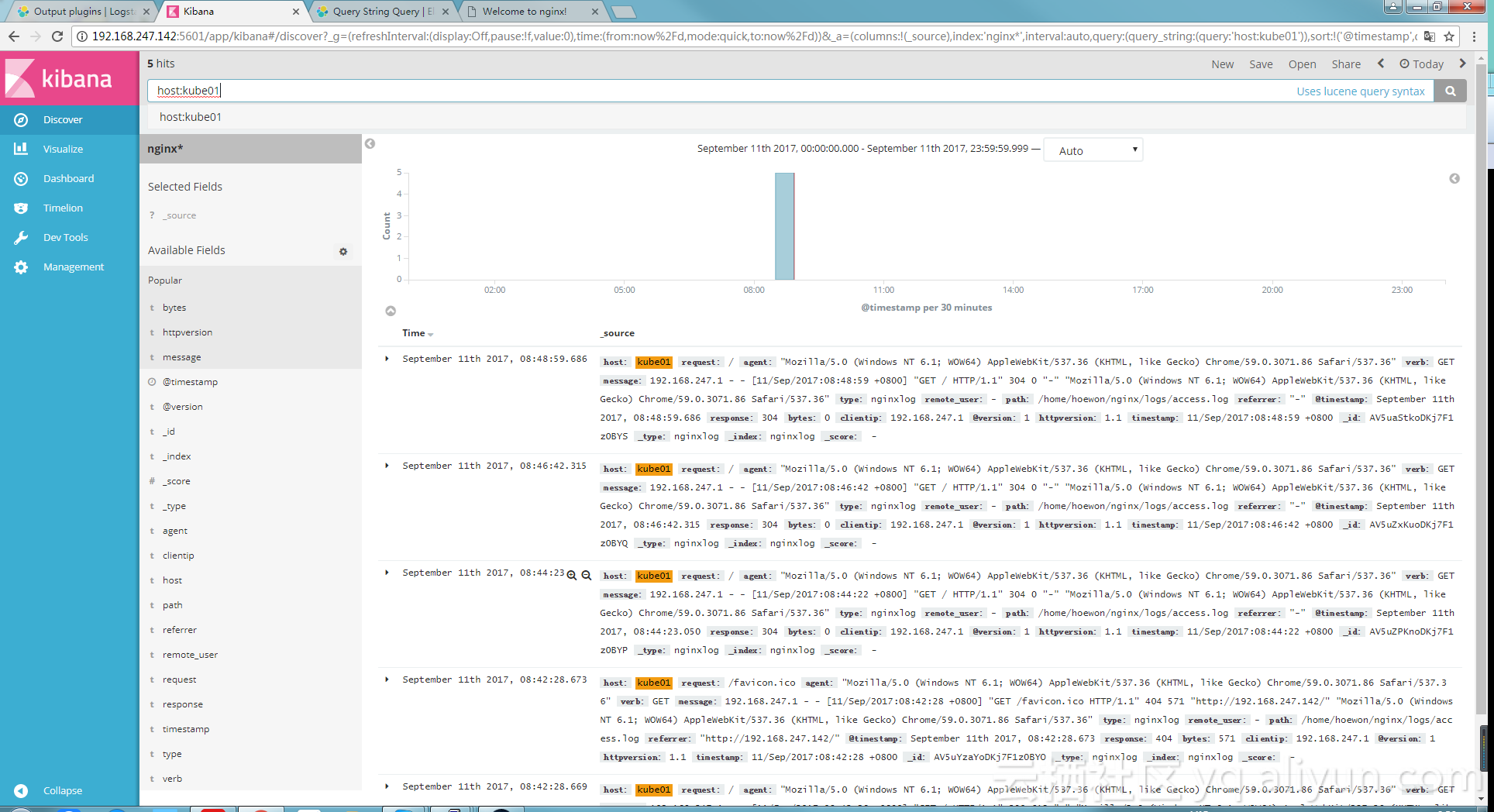

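Visit port 80 on the nginx host. Logstash picks up the new log entries automatically and sends them to ES. Then open port 5601 on the Kibana host (http://<kibana host>:5601/), configure the index pattern for the ES index, and the documents can be found in Discover, as shown below: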